Kubernetes clusters (FREE)

- Introduced in GitLab 10.1 for projects.

- Introduced in GitLab 11.6 for groups.

- Introduced in GitLab 11.11 for instances.

Using the GitLab project Kubernetes integration, you can:

- Use Review Apps.

- Run pipelines.

- Deploy your applications.

- Detect and monitor Kubernetes.

- Use it with Auto DevOps.

- Use Web terminals.

- Use Deploy Boards.

- Use Canary Deployments. (PREMIUM)

- Use deployment variables.

- Use role-based or attribute-based access controls.

- View Logs.

- Run serverless workloads on Kubernetes with Knative.

Besides integration at the project level, Kubernetes clusters can also be integrated at the group level or GitLab instance level.

To view your project-level Kubernetes clusters, navigate to Operations > Kubernetes from your project. On this page, you can add a new cluster and view information about your existing clusters, such as:

- Nodes count.

- Rough estimates of memory and CPU usage.

Setting up

Supported cluster versions

GitLab is committed to supporting at least two production-ready Kubernetes minor versions at any given time. We regularly review the versions we support, and provide a three-month deprecation period before we remove support for a specific version. The range of supported versions is based on the evaluation of:

- The versions supported by major managed Kubernetes providers.

- The versions supported by the Kubernetes community.

GitLab supports the following Kubernetes versions, and you can upgrade your Kubernetes version to any supported version at any time:

- 1.19 (support ends on February 22, 2022)

- 1.18 (support ends on November 22, 2021)

- 1.17 (support ends on September 22, 2021)

- 1.16 (support ends on July 22, 2021)

- 1.15 (support ends on May 22, 2021)

Some GitLab features may support versions outside the range provided here.

Adding and removing clusters

See Adding and removing Kubernetes clusters for details on how to:

- Create a cluster in Google Cloud Platform (GCP) or Amazon Elastic Kubernetes Service (EKS) using the GitLab UI.

- Add an integration to an existing cluster from any Kubernetes platform.

Multiple Kubernetes clusters

- Introduced in GitLab Premium 10.3.

- Moved to GitLab Free in 13.2.

You can associate more than one Kubernetes cluster with your project. That way, you can have different clusters for different environments, such as development, staging, and production. Add another cluster the same way you added the first, and make sure to set an environment scope that differentiates the new cluster from the rest.

Setting the environment scope

When adding more than one Kubernetes cluster to your project, you need to differentiate them with an environment scope. The environment scope associates clusters with environments similar to how the environment-specific CI/CD variables work.

The default environment scope is *, which means all jobs, regardless of their environment, use that cluster. Each scope can be used by only a single cluster in a project; otherwise, a validation error occurs. Also, jobs that don't have an environment keyword set can't access any cluster.

For example, let's say the following Kubernetes clusters exist in a project:

| Cluster | Environment scope |
|---|---|
| Development | * |
| Production | production |

And the following environments are set in .gitlab-ci.yml:

    stages:
      - test
      - deploy

    test:
      stage: test
      script: sh test

    deploy to staging:
      stage: deploy
      script: make deploy
      environment:
        name: staging
        url: https://staging.example.com/

    deploy to production:
      stage: deploy
      script: make deploy
      environment:
        name: production
        url: https://example.com/

The results:

- The Development cluster details are available in the deploy to staging job.

- The Production cluster details are available in the deploy to production job.

- No cluster details are available in the test job because it doesn't define any environment.
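The outcome of this example can be sketched as a small shell function. This is only an illustration of the two-cluster table above, not how GitLab implements scope matching; the function name `select_cluster` is hypothetical.

```shell
# Hypothetical helper: mirrors the two-cluster example above.
# Prints the name of the cluster whose environment scope matches the job's environment.
select_cluster() {
  env_name="$1"
  # Jobs without an environment cannot access any cluster.
  if [ -z "$env_name" ]; then
    return 1
  fi
  case "$env_name" in
    production) echo "Production" ;;   # the exact scope is preferred over the wildcard
    *)          echo "Development" ;;  # scope "*" matches every other environment
  esac
}

select_cluster staging                    # prints Development
select_cluster production                 # prints Production
select_cluster "" || echo "no cluster"    # prints no cluster
```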

Configuring your Kubernetes cluster

After adding a Kubernetes cluster to GitLab, read this section that covers important considerations for configuring Kubernetes clusters with GitLab.

Security implications

WARNING: Cluster security as a whole is based on a model where developers are trusted, so only trusted users should be allowed to control your clusters.

The default cluster configuration grants access to a wide set of functionalities needed to successfully build and deploy a containerized application. Bear in mind that the same credentials are used for all the applications running on the cluster.

GitLab-managed clusters

- Introduced in GitLab 11.5.

- Became optional in GitLab 11.11.

You can choose to allow GitLab to manage your cluster for you. If your cluster is managed by GitLab, resources for your projects are automatically created. See the Access controls section for details about the created resources.

If you choose to manage your own cluster, project-specific resources aren't created automatically. If you are using Auto DevOps, you must explicitly provide the KUBE_NAMESPACE deployment variable for your deployment jobs to use. Otherwise, a namespace is created for you.

Important notes

Note the following with GitLab and clusters:

- If you install applications on your cluster, GitLab creates the resources required to run them, even if you have chosen to manage your own cluster.

- Be aware that manually managing resources that have been created by GitLab, like namespaces and service accounts, can cause unexpected errors. If this occurs, try clearing the cluster cache.

Clearing the cluster cache

Introduced in GitLab 12.6.

If you allow GitLab to manage your cluster, GitLab stores a cached version of the namespaces and service accounts it creates for your projects. If you modify these resources in your cluster manually, this cache can fall out of sync with your cluster. This can cause deployment jobs to fail.

To clear the cache:

- Navigate to your project's Operations > Kubernetes page, and select your cluster.

- Expand the Advanced settings section.

- Click Clear cluster cache.

Base domain

Introduced in GitLab 11.8.

You do not need to specify a base domain on cluster settings when using GitLab Serverless. The domain in that case is specified as part of the Knative installation. See Installing Applications.

Specifying a base domain automatically sets KUBE_INGRESS_BASE_DOMAIN as a deployment variable.

If you are using Auto DevOps, this domain is used for the different stages, such as Auto Review Apps and Auto Deploy.

The domain should have a wildcard DNS configured to the Ingress IP address. After Ingress has been installed (see Installing Applications), you can either:

- Create an A record that points to the Ingress IP address with your domain provider.

- Enter a wildcard DNS address using a service such as nip.io or xip.io. For example, 192.168.1.1.xip.io.
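As a minimal sketch of the second option, a wildcard DNS address can be derived from the Ingress IP address by appending the service's domain. The helper name `base_domain_for_ip` is hypothetical, and the sketch assumes nip.io is the service in use.

```shell
# Hypothetical helper: builds a wildcard-DNS base domain from an Ingress IP
# address using nip.io, one of the services mentioned above.
base_domain_for_ip() {
  printf '%s.nip.io\n' "$1"
}

# 192.168.1.1 is the example address from the text, not a real Ingress IP.
base_domain_for_ip 192.168.1.1   # prints 192.168.1.1.nip.io
```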

To determine the external Ingress IP address, or external Ingress hostname:

- If the cluster is on GKE:

  - Click the Google Kubernetes Engine link in the Advanced settings, or go directly to the Google Kubernetes Engine dashboard.

  - Select the proper project and cluster.

  - Click Connect.

  - Execute the gcloud command in a local terminal or using the Cloud Shell.

- If the cluster is not on GKE: Follow the specific instructions for your Kubernetes provider to configure kubectl with the right credentials. The output of the following examples shows the external endpoint of your cluster. This information can then be used to set up DNS entries and forwarding rules that allow external access to your deployed applications.

Depending on your Ingress, the external IP address can be retrieved in various ways. This list provides a generic solution, and some GitLab-specific approaches:

- In general, you can list the IP addresses of all load balancers by running:

      kubectl get svc --all-namespaces -o jsonpath='{range.items[?(@.status.loadBalancer.ingress)]}{.status.loadBalancer.ingress[*].ip} '

- If you installed Ingress using the Applications, run:

      kubectl get service --namespace=gitlab-managed-apps ingress-nginx-ingress-controller -o jsonpath='{.status.loadBalancer.ingress[0].ip}'

- Some Kubernetes clusters return a hostname instead, like Amazon EKS. For these platforms, run:

      kubectl get service --namespace=gitlab-managed-apps ingress-nginx-ingress-controller -o jsonpath='{.status.loadBalancer.ingress[0].hostname}'

  If you use EKS, an Elastic Load Balancer is also created, which incurs additional AWS costs.

- Istio/Knative uses a different command. Run:

      kubectl get svc --namespace=istio-system istio-ingressgateway -o jsonpath='{.status.loadBalancer.ingress[0].ip} '

If you see a trailing % on some Kubernetes versions, do not include it.

Installing applications

GitLab can install and manage some applications like Helm, GitLab Runner, Ingress, Prometheus, and so on, in your project-level cluster. For more information on installing, upgrading, uninstalling, and troubleshooting applications for your project cluster, see GitLab Managed Apps.

Auto DevOps

Auto DevOps automatically detects, builds, tests, deploys, and monitors your applications.

To make full use of Auto DevOps (Auto Deploy, Auto Review Apps, and Auto Monitoring) the Kubernetes project integration must be enabled. However, Kubernetes clusters can be used without Auto DevOps.

Deploying to a Kubernetes cluster

A Kubernetes cluster can be the destination for a deployment job. If:

- The cluster is integrated with GitLab, special deployment variables are made available to your job and configuration is not required. You can immediately begin interacting with the cluster from your jobs using tools such as kubectl or helm.

- You don't use the GitLab cluster integration, you can still deploy to your cluster. However, you must configure Kubernetes tools yourself using CI/CD variables before you can interact with the cluster from your jobs.

Deployment variables

Deployment variables require a valid Deploy Token named gitlab-deploy-token, and the following command in your deployment job script, for Kubernetes to access the registry:

- Using Kubernetes 1.18+:

      kubectl create secret docker-registry gitlab-registry --docker-server="$CI_REGISTRY" --docker-username="$CI_DEPLOY_USER" --docker-password="$CI_DEPLOY_PASSWORD" --docker-email="$GITLAB_USER_EMAIL" -o yaml --dry-run=client | kubectl apply -f -

- Using Kubernetes <1.18:

      kubectl create secret docker-registry gitlab-registry --docker-server="$CI_REGISTRY" --docker-username="$CI_DEPLOY_USER" --docker-password="$CI_DEPLOY_PASSWORD" --docker-email="$GITLAB_USER_EMAIL" -o yaml --dry-run | kubectl apply -f -
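The two commands above differ only in the dry-run flag. A job script that has to run against both version ranges could choose the flag with a small helper, sketched below. The helper name `dry_run_flag` is hypothetical, and the sketch assumes the caller already knows the minor version number (for example, 18 for 1.18).

```shell
# Hypothetical helper: picks the dry-run flag from a minor version number.
# Assumes the minor version is passed in as a plain integer, such as 18 for 1.18.
dry_run_flag() {
  if [ "$1" -ge 18 ]; then
    echo "--dry-run=client"   # form used by the 1.18+ command above
  else
    echo "--dry-run"          # form used by the <1.18 command above
  fi
}

dry_run_flag 19   # prints --dry-run=client
dry_run_flag 17   # prints --dry-run
```

The printed flag can then stand in for the dry-run argument of the kubectl create secret command.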

The Kubernetes cluster integration exposes these deployment variables in the GitLab CI/CD build environment to deployment jobs. Deployment jobs are jobs that have a target environment defined.

| Deployment Variable | Description |
|---|---|
| KUBE_URL | Equal to the API URL. |
| KUBE_TOKEN | The Kubernetes token of the environment service account. Prior to GitLab 11.5, KUBE_TOKEN was the Kubernetes token of the main service account of the cluster integration. |
| KUBE_NAMESPACE | The namespace associated with the project's deployment service account. In the format <project_name>-<project_id>-<environment>. For GitLab-managed clusters, a matching namespace is automatically created by GitLab in the cluster. If your cluster was created before GitLab 12.2, the default KUBE_NAMESPACE is set to <project_name>-<project_id>. |
| KUBE_CA_PEM_FILE | Path to a file containing PEM data. Only present if a custom CA bundle was specified. |
| KUBE_CA_PEM | (deprecated) Raw PEM data. Only if a custom CA bundle was specified. |
| KUBECONFIG | Path to a file containing kubeconfig for this deployment. CA bundle would be embedded if specified. This configuration also embeds the same token defined in KUBE_TOKEN so you likely need only this variable. This variable name is also automatically picked up by kubectl so you don't need to reference it explicitly if using kubectl. |
| KUBE_INGRESS_BASE_DOMAIN | From GitLab 11.8, this variable can be used to set a domain per cluster. See cluster domains for more information. |

Custom namespace

- Introduced in GitLab 12.6.

- An option to use project-wide namespaces was added in GitLab 13.5.

The Kubernetes integration provides a KUBECONFIG with an auto-generated namespace to deployment jobs. It defaults to using project-environment specific namespaces of the form <prefix>-<environment>, where <prefix> is of the form <project_name>-<project_id>. To learn more, read Deployment variables.

You can customize the deployment namespace in a few ways:

- You can choose between a namespace per environment or a namespace per project. A namespace per environment is the default and recommended setting, as it prevents the mixing of resources between production and non-production environments.

- When using a project-level cluster, you can additionally customize the namespace prefix. When using namespace-per-environment, the deployment namespace is <prefix>-<environment>, but otherwise just <prefix>.

- For non-managed clusters, the auto-generated namespace is set in the KUBECONFIG, but the user is responsible for ensuring its existence. You can fully customize this value using environment:kubernetes:namespace in .gitlab-ci.yml.
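The naming scheme described above can be sketched as follows. This is a simplified illustration, not GitLab's implementation: the helper name `deployment_namespace` and its `mode` argument are hypothetical, and the sketch ignores any normalization or length limits that GitLab may apply to the generated name.

```shell
# Hypothetical helper: composes the auto-generated namespace from its parts.
# mode is "environment" (namespace per environment, the default) or
# "project" (namespace per project).
deployment_namespace() {
  project_name="$1"
  project_id="$2"
  environment="$3"
  mode="${4:-environment}"
  prefix="${project_name}-${project_id}"
  if [ "$mode" = "environment" ]; then
    echo "${prefix}-${environment}"
  else
    echo "${prefix}"
  fi
}

deployment_namespace my-app 42 staging           # prints my-app-42-staging
deployment_namespace my-app 42 staging project   # prints my-app-42
```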

When you customize the namespace, existing environments remain linked to their current namespaces until you clear the cluster cache.

WARNING: By default, anyone who can create a deployment job can access any CI/CD variable in an environment's deployment job. This includes KUBECONFIG, which gives access to any secret available to the associated service account in your cluster. To keep your production credentials safe, consider using protected environments, combined with either:

- A GitLab-managed cluster and namespace per environment.

- An environment-scoped cluster per protected environment. The same cluster can be added multiple times with multiple restricted service accounts.

Integrations

Canary Deployments

Leverage Kubernetes' Canary deployments and visualize your canary deployments right inside the Deploy Board, without the need to leave GitLab.

Read more about Canary Deployments

Deploy Boards

GitLab Deploy Boards offer a consolidated view of the current health and status of each CI environment running on Kubernetes. They display the status of the pods in the deployment. Developers and other teammates can view the progress and status of a rollout, pod by pod, in the workflow they already use without any need to access Kubernetes.

Viewing pod logs

GitLab enables you to view the logs of running pods in connected Kubernetes clusters. By displaying the logs directly in GitLab, developers can avoid having to manage console tools or jump to a different interface.

Read more about Kubernetes logs

Web terminals

Introduced in GitLab 8.15.

When enabled, the Kubernetes integration adds web terminal support to your environments. This is based on the exec functionality found in Docker and Kubernetes, so you get a new shell session in your existing containers. To use this integration, you should deploy to Kubernetes using the deployment variables above, ensuring any deployments, replica sets, and pods are annotated with:

- app.gitlab.com/env: $CI_ENVIRONMENT_SLUG

- app.gitlab.com/app: $CI_PROJECT_PATH_SLUG

$CI_ENVIRONMENT_SLUG and $CI_PROJECT_PATH_SLUG are the values of the CI/CD variables.
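One way to produce these annotations in a job script is to render them from the CI/CD variables, as in the sketch below. The helper name `gitlab_annotations` is hypothetical, and the fallback values exist only so the snippet runs outside a pipeline. Where the output is placed depends on how your manifests are templated.

```shell
# Hypothetical helper: prints an annotations block for the metadata of a
# deployment, replica set, or pod template.
gitlab_annotations() {
  cat <<EOF
annotations:
  app.gitlab.com/env: ${CI_ENVIRONMENT_SLUG}
  app.gitlab.com/app: ${CI_PROJECT_PATH_SLUG}
EOF
}

# Placeholder values for running outside a pipeline; in a real job,
# GitLab CI/CD provides both variables.
CI_ENVIRONMENT_SLUG="${CI_ENVIRONMENT_SLUG:-staging}"
CI_PROJECT_PATH_SLUG="${CI_PROJECT_PATH_SLUG:-my-group-my-app}"
gitlab_annotations
```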

You must be the project owner or have maintainer permissions to use terminals.

Support is limited to the first container in the first pod of your environment.

Troubleshooting

Before the deployment job starts, GitLab creates the following specifically for the deployment job:

- A namespace.

- A service account.

However, sometimes GitLab cannot create them. In such instances, your job can fail with the message:

    This job failed because the necessary resources were not successfully created.

To find the cause of this error when creating a namespace and service account, check the logs.

Reasons for failure include:

- The token you gave GitLab does not have the cluster-admin privileges required by GitLab.

- Missing KUBECONFIG or KUBE_TOKEN deployment variables. To be passed to your job, they must have a matching environment:name. If your job has no environment:name set, the Kubernetes credentials are not passed to it.
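To rule out the second cause early, a deployment job script could check for the credentials before doing any work. The following is a minimal sketch; the function name `check_kube_credentials` and its messages are hypothetical.

```shell
# Hypothetical pre-flight check for a deployment job script.
# Returns non-zero when neither KUBECONFIG nor KUBE_TOKEN reached the job.
check_kube_credentials() {
  if [ -z "${KUBECONFIG:-}" ] && [ -z "${KUBE_TOKEN:-}" ]; then
    echo "No Kubernetes credentials: does this job set environment:name?" >&2
    return 1
  fi
  echo "Kubernetes credentials present"
}

check_kube_credentials || echo "skipping deploy"
```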

NOTE: Project-level clusters upgraded from GitLab 12.0 or older may be configured in a way that causes this error. Ensure you deselect the GitLab-managed cluster option if you want to manage namespaces and service accounts yourself.

Monitoring your Kubernetes cluster

Automatically detect and monitor Kubernetes metrics. Automatic monitoring of NGINX Ingress is also supported.

Read more about Kubernetes monitoring

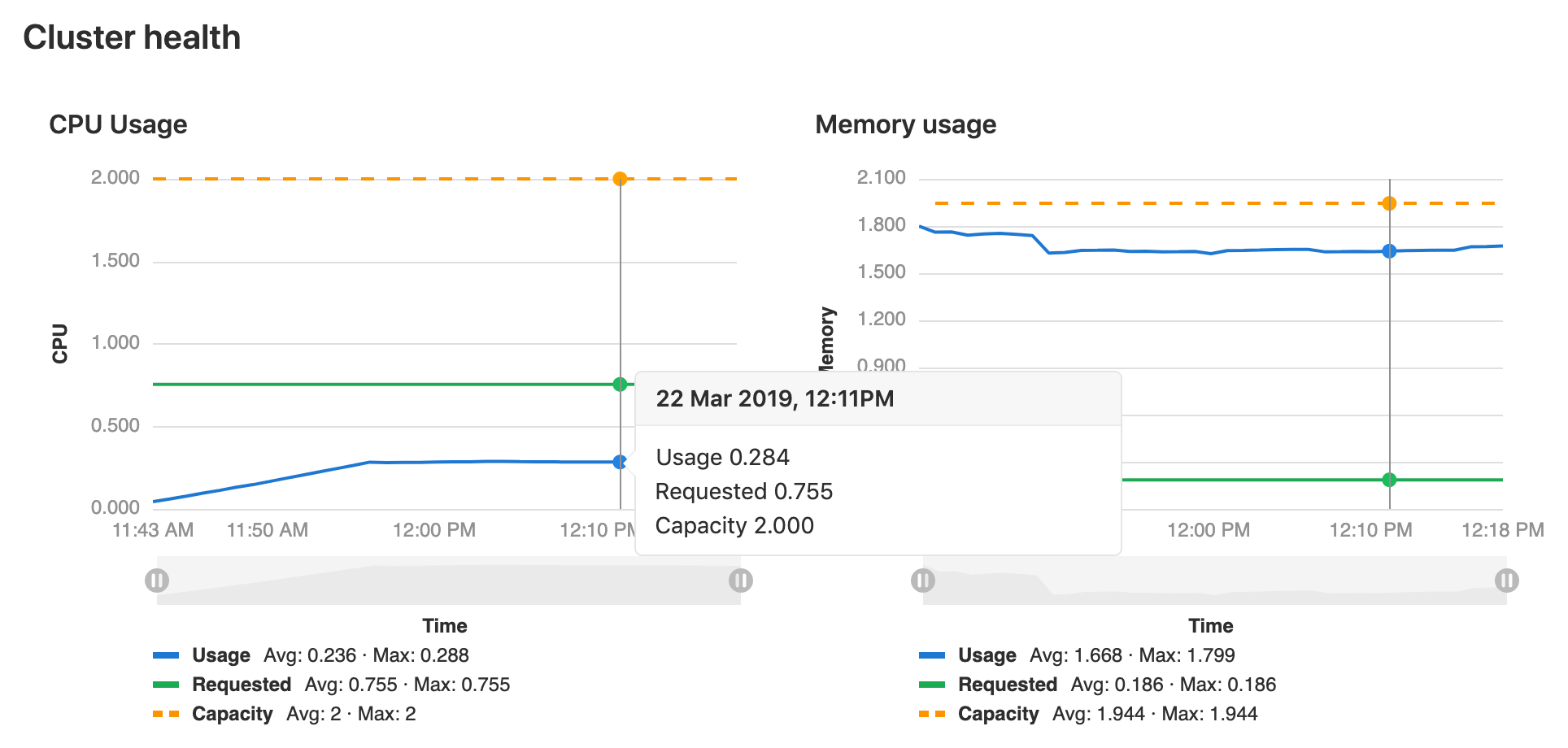

Visualizing cluster health

- Introduced in GitLab Ultimate 10.6.

- Moved to GitLab Free in 13.2.

When Prometheus is deployed, GitLab monitors the cluster's health. At the top of the cluster settings page, CPU and memory utilization is displayed, along with the total amount available. Keeping an eye on cluster resources is important: if the cluster runs out of memory, pods may be shut down or fail to start.